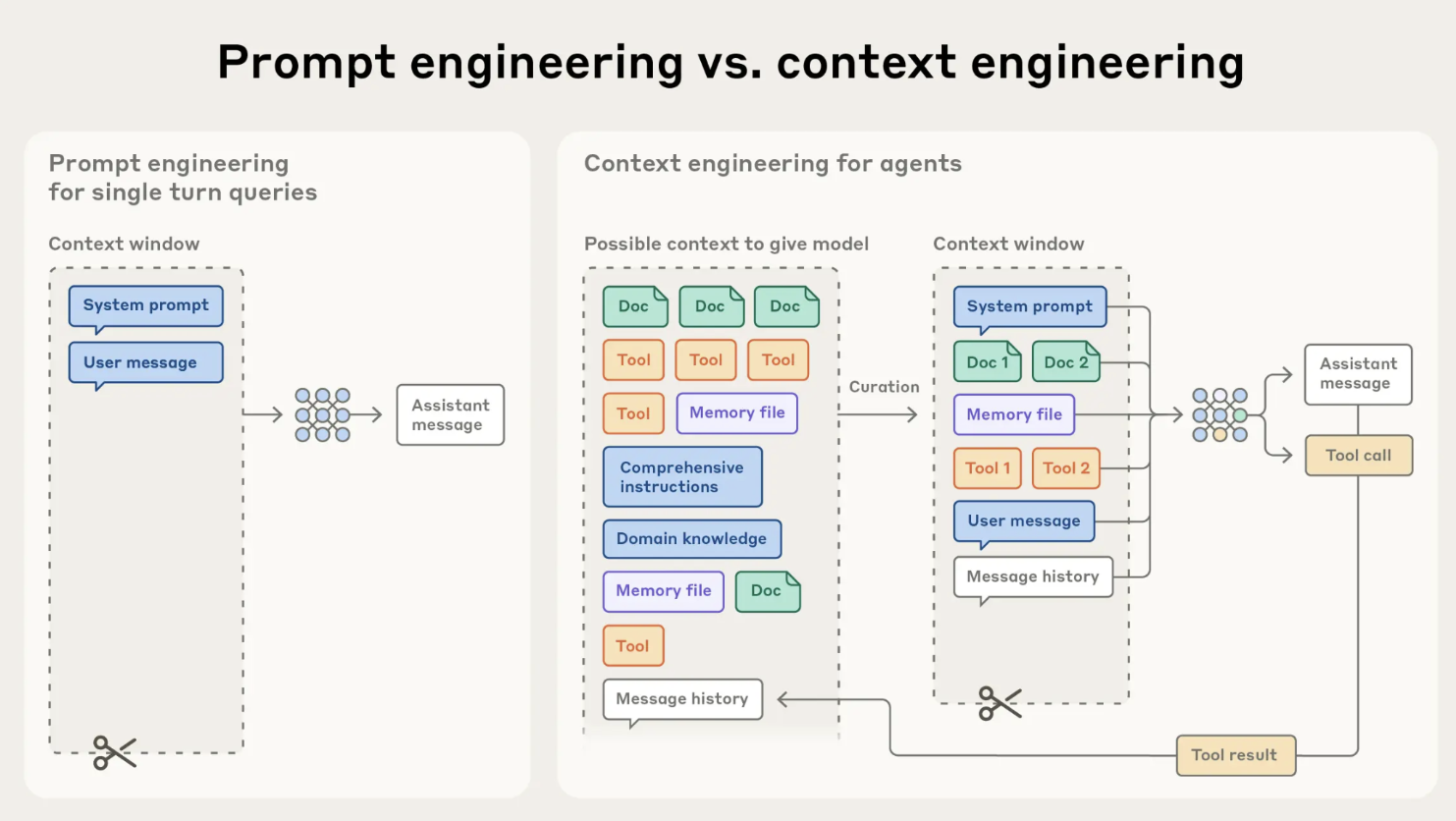

Context Engineering

Context is king. It is the most important factor in getting your agent to behave as expected.

What is context?

It is the sum of:

- System prompt

- User messages

- Agent messages

- Tool calls

- Tool call results
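Here is a minimal sketch that makes the list concrete. It assumes a generic chat-style message list. The `Message` type, the role names, and the helpers are hypothetical and do not belong to any particular vendor's API. The point is that every item above is resent to the model on every turn, so all of it counts toward the context window. Character counts stand in, roughly, for tokens.

```python
from dataclasses import dataclass


@dataclass
class Message:
    # Hypothetical roles, one per item in the list above.
    role: str  # "system", "user", "agent", "tool_call", or "tool_result"
    content: str


def context_size(messages: list[Message]) -> int:
    """Crude size of the context in characters (a stand-in for tokens)."""
    return sum(len(m.content) for m in messages)


def size_by_role(messages: list[Message]) -> dict[str, int]:
    """Show which parts of the conversation take up the context."""
    totals: dict[str, int] = {}
    for m in messages:
        totals[m.role] = totals.get(m.role, 0) + len(m.content)
    return totals


conversation = [
    Message("system", "You are a support agent for an online store."),
    Message("user", "Where is my order 1234?"),
    Message("agent", "Let me look that up for you."),
    Message("tool_call", 'get_order_status(order_id="1234")'),
    Message("tool_result", "Order 1234: shipped with UPS, expected to arrive on 2024-05-10."),
]

print(context_size(conversation))
print(size_by_role(conversation))
```

The per-role breakdown shows where the context is actually going, such as verbose agent replies or oversized tool results.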

Long system prompts

Lost-in-the-middle

Almost all models recall best what is at the beginning and the end of the prompt. Explicit, specific instructions in the middle are often forgotten. I often see agent engineers stuffing line after line of must-follow instructions into the middle of the prompt. The model will not reliably act on them.
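The sketch below shows one way to act on this, assuming you assemble the system prompt in code. Must-follow rules go at the start, the final checks go at the end, and long background material sits in between. The function name and section labels are hypothetical. Each rule appears only once, so the prompt does not end up with several lines describing the same behavior.

```python
def bullets(items: list[str]) -> str:
    """Format a list of instructions as a bulleted block."""
    return "\n".join(f"- {item}" for item in items)


def build_system_prompt(key_rules: list[str], background: str, final_rules: list[str]) -> str:
    """Assemble a system prompt that keeps must-follow instructions at the
    edges, where models recall them best, and reference material in the middle."""
    return "\n\n".join([
        "Rules:\n" + bullets(key_rules),
        "Background:\n" + background,
        "Before you answer:\n" + bullets(final_rules),
    ])


prompt = build_system_prompt(
    ["Only answer questions about orders."],
    "Shipping takes 3-5 business days. Returns are accepted within 30 days.",
    ["Reply in at most three sentences."],
)
print(prompt)
```

This does not guarantee compliance. It only keeps the most important instructions out of the position where they are most likely to be ignored.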

Conflicting instructions

Putting conflicting instructions in the prompt is a sure way to make the model fail. Reread your prompt: you have most likely written more than two lines that refer to the same behavior. Out-of-scope instructions are the most common case. A prompt will often contain three definitions of what is out of scope, all of them contradicting each other, so of course the agent does not know what to do.

Agent messages count as context

Especially the long ones. If your agent gives long responses for no reason, they fill up the context window and make the model worse at its task.

Degradation of performance

The more user messages, agent messages, and tool calls there are, the more context the model has to work with, and the worse it performs. This is especially true because the system prompt is slightly “forgotten” with each new turn.

Tool calls

Tool calls are probably the most frequent offender here. I often see tools returning huge amounts of data, with a tool note like “Use the first 2 lines, they are the most relevant.” Why stuff all of this data into the context window? Don’t return raw error codes, raw data, or anything else unprocessed to the model. Do return human-readable text: the model was trained on text.

Bad Example

Returns raw status code and redundant explanation:

Good Example

Returns clear, semantic result:
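Here is a minimal sketch of the difference. It assumes a hypothetical order-lookup tool backed by an API that returns status codes and internal fields. `fetch_order`, the payload fields, and the state-code mapping are all invented for illustration. The bad version hands the raw JSON to the model along with a note about which fields matter. The good version decodes the response in code and returns one readable sentence, including for errors.

```python
import json


def fetch_order(order_id: str) -> dict:
    """Hypothetical stand-in for a backend call; returns a raw API payload."""
    if order_id != "1234":
        return {"status": 404, "error": "ERR_ORDER_NOT_FOUND"}
    return {
        "status": 200,
        "data": {
            "order_id": order_id,
            "state_code": 3,
            "carrier": "UPS",
            "eta": "2024-05-10",
            "warehouse_id": "WH-17",
            "internal_flags": ["x1", "k9"],
        },
    }


# Hypothetical mapping from the backend's numeric state codes to words.
STATE_NAMES = {1: "received", 2: "packed", 3: "shipped", 4: "delivered"}


def get_order_status_bad(order_id: str) -> str:
    """Bad: dumps the raw payload plus a note telling the model what to use."""
    response = fetch_order(order_id)
    return (
        json.dumps(response)
        + "\nNote: status 200 means OK. Use state_code and eta, they are the most relevant."
    )


def get_order_status_good(order_id: str) -> str:
    """Good: translates codes into a short, human-readable sentence."""
    response = fetch_order(order_id)
    if response["status"] == 404:
        return f"No order with ID {order_id} exists. Ask the user to double-check the order number."
    if response["status"] != 200:
        return "The order system is unavailable right now. Try again later."
    data = response["data"]
    state = STATE_NAMES.get(data["state_code"], "unknown")
    return (
        f"Order {data['order_id']}: {state} with {data['carrier']}, "
        f"expected to arrive on {data['eta']}."
    )


print(get_order_status_bad("1234"))
print(get_order_status_good("1234"))
print(get_order_status_good("9999"))
```

The design choice is to move the interpretation work out of the context window. The tool, not the model, decides which fields matter and what the codes mean.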