Using Smarter Models

A simple example

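Look at this forwarding instructions example: a block in the real-time model's prompt that explains which exit code to use in each situation, so the model can call the forward(code) function with the right parameters.

Keeping this whole block in the ‘gpt-realtime’ prompt wastes context, and the model can forget the instructions or pass the wrong code.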

The Solution

Simplify the tool

Remove the code parameter from the forward() function. The model will just call a plain forward() function.

Use a smarter model

Inside the forward() function, first send the conversation history, together with the original instruction block you removed, to a smarter model like ‘gpt-5-mini’ to figure out the exit code. Then forward the call with that code.

Profit!

No context wasted, no instructions forgotten, no extra work for the real-time model.
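A minimal sketch of this pattern in Python, assuming your server receives the real-time model's tool calls and keeps the conversation history as a list of role/content dicts. `FORWARD_TOOL`, the exit codes, `FALLBACK_CODE` and the `transfer` hook are hypothetical names for illustration. `complete(system_prompt, user_text)` stands in for any call to the smarter model; `openai_complete` shows one possible way to back it with the OpenAI Python SDK.

```python
from typing import Callable

# (system_prompt, user_text) -> model reply. Injected so the tool logic can be
# tested without network access.
Complete = Callable[[str, str], str]

# What the real-time model sees: a forward() tool with no parameters.
FORWARD_TOOL = {
    "type": "function",
    "name": "forward",
    "description": "Forward the caller to a human agent.",
    "parameters": {"type": "object", "properties": {}},
}

# Hypothetical exit codes; the original instruction block would define the real ones.
VALID_CODES = ("BILLING", "TECH_SUPPORT", "SALES")
FALLBACK_CODE = "GENERAL"  # hypothetical default when the reply is unusable

# The instruction block removed from the real-time prompt now lives here.
FORWARDING_INSTRUCTIONS = (
    "You decide where a phone call should be forwarded.\n"
    "<original forwarding instructions go here>\n"
    f"Reply with exactly one exit code from: {', '.join(VALID_CODES)}."
)


def openai_complete(system_prompt: str, user_text: str) -> str:
    """One possible Complete implementation using the OpenAI Python SDK."""
    from openai import OpenAI  # needs `pip install openai` and OPENAI_API_KEY

    response = OpenAI().chat.completions.create(
        model="gpt-5-mini",
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
    )
    return response.choices[0].message.content or ""


def forward(history: list[dict[str, str]], complete: Complete,
            transfer: Callable[[str], None]) -> str:
    """Handler for the parameterless forward() tool call."""
    transcript = "\n".join(f"{t['role']}: {t['content']}" for t in history)
    reply = complete(FORWARDING_INSTRUCTIONS, transcript).strip().upper()
    # Only accept a known code; anything else falls back to the default.
    code = reply if reply in VALID_CODES else FALLBACK_CODE
    transfer(code)  # hypothetical hook into your telephony layer
    return code
```

The real-time model now only decides that the call should be forwarded; the smarter model decides where, and the code validates its answer before acting on it.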

Reasoning models are much better than ‘gpt-realtime’ at instruction following and decision making.

Use ‘gpt-realtime’ for natural conversation, not for heavy decision making.

A simple example V2

We had a flow in Bezeq where we needed to walk the customer through step-by-step troubleshooting of their router. Only after completing the exact steps with the customer were we allowed to call the book_technician() tool.

The Problem

If the customer did not comply with the steps, the agent would end up calling the book_technician() tool too early.

The Solution

We added a new tool called request_booking_permission() that the model has to call before calling book_technician().

Inside this tool, we sent the whole conversation history to ‘claude-4.5-sonnet’ to figure out if the customer actually completed the steps. We chose this model because it is smart and fast enough to act as a gate.

The gate model returns either “yes” or “no” plus the list of steps the customer has not completed yet.

This reduced the number of wrong bookings to exactly zero.
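A minimal Python sketch of the gate, assuming the session keeps the transcript as role/content dicts and that the gate model is reached through an injected `complete(system_prompt, user_text)` function (in production this could wrap the Anthropic Python SDK's `client.messages.create`). The prompt wording, the `Session` class and the hard check inside `book_technician()` are assumptions for illustration, not the original Bezeq implementation.

```python
from dataclasses import dataclass
from typing import Callable

# (system_prompt, user_text) -> model reply; injected so the gate is testable offline.
Complete = Callable[[str, str], str]

# Hypothetical gate prompt; the real troubleshooting steps are passed in `steps`.
GATE_PROMPT = (
    "You review a support call transcript. The agent had to complete these "
    "router troubleshooting steps with the customer: {steps}.\n"
    "If every step was completed, reply 'yes'. Otherwise reply 'no' followed "
    "by the missing steps, one per line."
)


@dataclass
class Session:
    history: list[dict[str, str]]
    booking_allowed: bool = False


def request_booking_permission(session: Session, steps: list[str],
                               complete: Complete) -> str:
    """Handler for request_booking_permission(); the result goes back to the real-time model."""
    transcript = "\n".join(f"{t['role']}: {t['content']}" for t in session.history)
    reply = complete(GATE_PROMPT.format(steps=", ".join(steps)), transcript).strip()
    if reply.lower().startswith("yes"):
        session.booking_allowed = True
        return "Permission granted. You may call book_technician()."
    session.booking_allowed = False
    # Treat anything that isn't "yes" as a refusal; keep the listed missing steps.
    missing = reply[2:] if reply.lower().startswith("no") else reply
    return "Permission denied. Complete these steps first:\n" + missing.lstrip(" \n:,-").rstrip()


def book_technician(session: Session) -> str:
    # Assumed hard check: refuse unless the gate approved in this session.
    if not session.booking_allowed:
        return "Refused: call request_booking_permission() first."
    return "Technician booked."  # the real booking call would go here
```

Returning the missing steps, rather than a bare refusal, gives the real-time model something concrete to go back to with the customer.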

Handling Latency

When using smarter models inside tools, you introduce latency. In a voice conversation, dead air feels awkward.

Increasing the agent’s ability to answer general questions

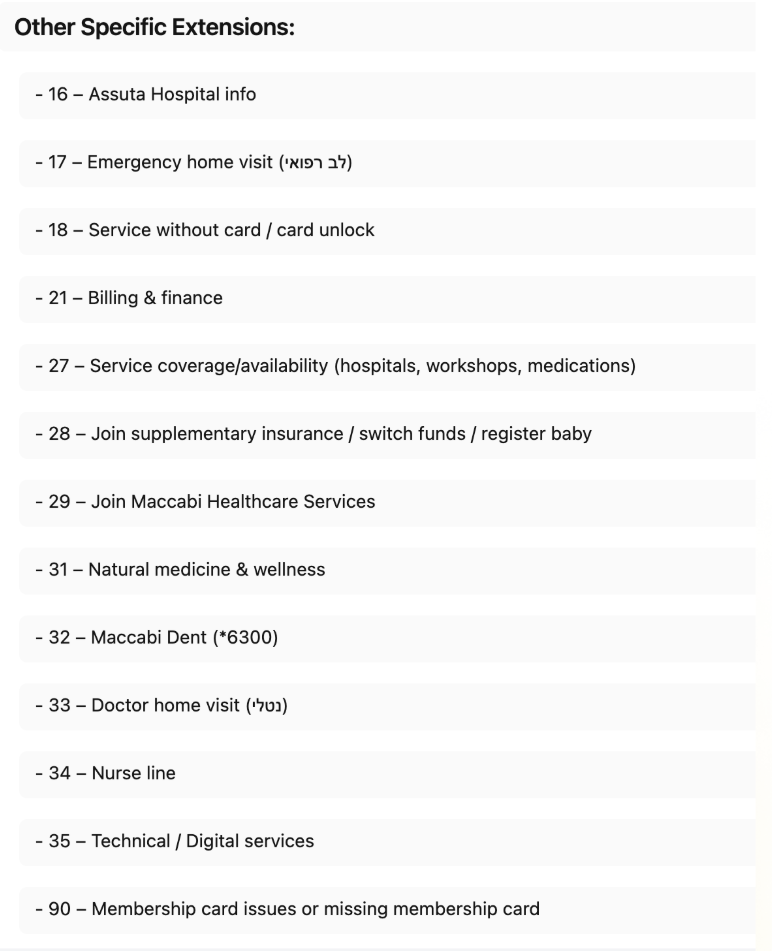

When a customer gives you a list of general questions and answers (a QnA document), you can use a smarter model to answer callers' questions. Instead of stuffing all this information into the context of ‘gpt-realtime’, you can create a tool called answer(question).

In this tool, send the question along with the QnA document to ‘gpt-5-mini’ and return its answer.

The answer will be more accurate than the one ‘gpt-realtime’ would give on its own, and you save valuable context tokens.
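A minimal sketch of the answer(question) tool, assuming the whole QnA document fits in a single prompt to the smarter model. `ANSWER_TOOL`, `load_qna` and the prompt text are hypothetical; `complete(system_prompt, user_text)` stands in for the call to ‘gpt-5-mini’ through whatever client you use. The tool definition is written roughly in the flat function-tool shape used in a Realtime API session configuration.

```python
from typing import Callable

# (system_prompt, user_text) -> model reply; injected so the tool is testable offline.
Complete = Callable[[str, str], str]

# Tool definition for the real-time model: a single free-text question.
ANSWER_TOOL = {
    "type": "function",
    "name": "answer",
    "description": "Answer a general question about the business.",
    "parameters": {
        "type": "object",
        "properties": {"question": {"type": "string"}},
        "required": ["question"],
    },
}

ANSWER_PROMPT = (
    "Answer the caller's question using only the QnA document below. "
    "If the document does not cover it, say that you don't know.\n\n"
    "QnA document:\n{qna}"
)


def load_qna(pairs: list[tuple[str, str]]) -> str:
    """Render the customer's question/answer pairs as one plain-text document."""
    return "\n\n".join(f"Q: {q}\nA: {a}" for q, a in pairs)


def answer(question: str, qna_document: str, complete: Complete) -> str:
    """Handler for answer(question); only the short answer reaches gpt-realtime."""
    return complete(ANSWER_PROMPT.format(qna=qna_document), question).strip()
```

The QnA document only ever appears in the smarter model's prompt, so the real-time session's context holds just the question and the returned answer.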